When algorithms join battlefield: US military’s AI dilemma [ANALYSIS]

![When algorithms join battlefield: US military’s AI dilemma [ANALYSIS]](https://www.azernews.az/media/2026/03/11/w1200h630fitcoverq80_1.jpg)

The Pentagon is using tools from Anthropic and OpenAI, two major artificial intelligence companies, to guide military decisions in Iran, decisions that could cost lives.

Reports from the Wall Street Journal and Axios indicate that Claude was utilised during the large-scale joint US-Israel bombardment of Iran that began on Saturday. This situation highlights the complexities involved in the US military's efforts to withdraw advanced AI tools from its operations, especially when the technology is already deeply integrated into its missions.

According to the Journal, the US military command utilised these AI tools for intelligence purposes, assistance in target selection, and conducting battlefield simulations.

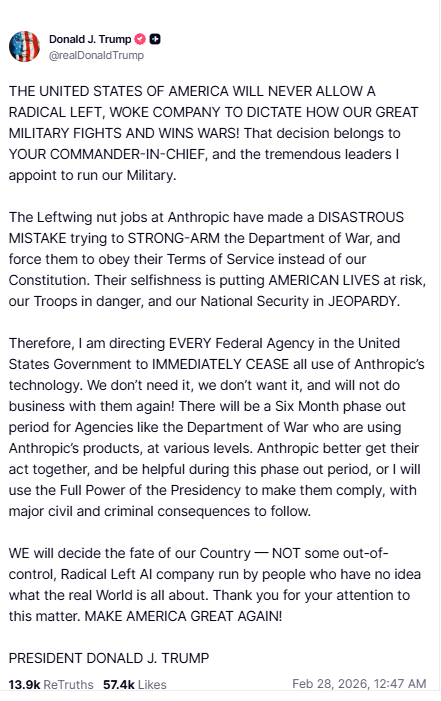

On Friday, just hours before the attack on Iran commenced, President Trump ordered all federal agencies to cease using Claude immediately. He criticised Anthropic on Truth Social, labelling it a “Radical Left AI company run by people who have no idea what the real world is all about.”

Following the rift with Anthropic, rival company OpenAI stepped in. Sam Altman, CEO of OpenAI, announced that he had reached an agreement with the Pentagon for the use of the company’s tools, including ChatGPT, within its classified network.

This is not the first time reports have emerged of AI being used in US conflicts.

Tensions escalated after the US military's use of Claude in a raid to capture Venezuela's president, Nicolás Maduro, in January. Anthropic objected, citing its terms of use that prohibit applications of Claude for violent purposes, weapon development, or surveillance.

Since that incident, relations between Trump, the Pentagon, and the AI company have deteriorated. In a lengthy post on X on Friday, Defence Secretary Pete Hegseth accused Anthropic of “arrogance and betrayal,” asserting that “America’s warfighters will never be held hostage by the ideological whims of Big Tech.” He demanded full and unrestricted access to all of Anthropic’s AI models for every lawful purpose.

However, Hegseth acknowledged the challenge of quickly detaching military systems from the AI tool, given its widespread usage. He mentioned that Anthropic would continue providing services for “no more than six months” to ensure a smooth transition to a more suitable and patriotic service.

But how feasible is this? Can AI really be used in war?

Claude's role in the war involved analysing satellite images and intercepted communications. It ranked and confirmed high-value targets, simulated strike outcomes before they occurred, and tracked enemy positions in real time.

Several AI models are currently being used in military applications, including Grok, Google’s Gemini, and various GPT models from OpenAI. The Pentagon secured a contract with OpenAI shortly after the fallout with Anthropic, specifically allowing the use of GPT models for classified military purposes by February 2026. Gemini, on the other hand, has been designated for unclassified use, with negotiations for access ongoing. In July 2025, the Pentagon also awarded contracts valued at up to $200 million through the Chief Digital and Artificial Intelligence Office (CDAO) to enhance frontier AI in warfare and planning.

It should be noted that these models are large language models (LLMs), a type of AI trained on vast amounts of data to understand, generate, and analyse human-like text.

The Pentagon's contracts encompass a focus on large language models, agentic workflows, and both classified and unclassified deployments for national security and intelligence purposes. Despite these advancements, OpenAI CEO Sam Altman has expressed concerns about whether AI systems are ready to make warfighting decisions, emphasising during the same week he signed a deal with the Pentagon that AI should not be entrusted with such critical choices.

The future may see the emergence of autonomous weapons capable of selecting and striking targets without human approval, AI-powered humanoid soldiers on the battlefield, drone swarms that adapt in real-time, extensive surveillance of both enemy and civilian populations, and predictive warfare that anticipates attacks before they occur.

"In reality, the integration of AI into warfare did not begin with the recent crisis," AI and cybersecurity expert Ryan Colloway told AzerNEWS.

"The Pentagon has spent years developing algorithmic warfare programs designed to enhance battlefield awareness and accelerate decision-making. One of the earliest and most notable initiatives was Project Maven, launched to enable machine learning systems to analyse drone footage and automatically identify objects such as vehicles, weapons systems, and infrastructure. The project marked a turning point in how artificial intelligence could augment military intelligence operations. Israel also uses AI systems, such as “Lavender”, which identifies suspected militants through data analysis, and “The Gospel”, which suggests strike targets."

Yet the growing dependence on private-sector AI companies has created a new and unexpected tension between Silicon Valley and the national security establishment, argues the expert:

"Through entities such as the Chief Digital and Artificial Intelligence Office, the United States has increasingly sought to integrate frontier technologies into national security planning. In recent years, the office has awarded major contracts aimed at accelerating the development of large language models, advanced analytics, and autonomous systems capable of supporting both classified and unclassified operations. Many technology firms have attempted to place restrictions on how their systems can be used, particularly when it comes to lethal operations or surveillance. This friction is not new. In 2018, internal protests at Google forced the company to withdraw from military cooperation tied to Project Maven, illustrating how ethical concerns within the tech sector can directly affect defence policy."

Despite the rapid expansion of AI integration, even industry leaders caution that the technology remains far from ready to independently make battlefield decisions, he added:

"The emergence of fully autonomous weapons systems remains one of the most controversial issues in international security, as global institutions have yet to establish binding regulations governing machines capable of selecting and attacking targets independently. Artificial intelligence is increasingly transforming the speed, scale, and complexity of modern warfare. Instead of replacing soldiers, today’s AI systems function primarily as decision-support tools, analyzing massive data streams, identifying patterns invisible to human analysts, and offering commanders possible courses of action. In a battlefield environment where information arrives from satellites, drones, sensors, and electronic intercepts simultaneously, such analytical power can offer decisive advantages. If both sides rely on AI-assisted targeting and intelligence, conflicts could escalate faster than human diplomacy can react."

Mr. Colloway candidly expressed his concerns, pointing out that the quickening pace of military decision-making could lead to new risks:

"As AI systems compress the time required to evaluate threats and recommend responses, the window for human deliberation narrows. Military analysts increasingly warn that algorithm-driven warfare could shorten decision cycles to the point where escalation unfolds faster than diplomacy can respond. The most controversial question, however, concerns the future of fully autonomous weapons. Major military powers, including the United States, China, Russia, and Israel, are actively exploring technologies that could eventually enable machines to identify and strike targets without direct human approval. While international discussions within the United Nations have attempted to address the ethical implications of such systems, no binding global framework currently regulates their development. What is certain is that the battlefield of the twenty-first century will not be defined solely by soldiers, tanks, or missiles, but also by algorithms quietly processing data behind the scenes."
